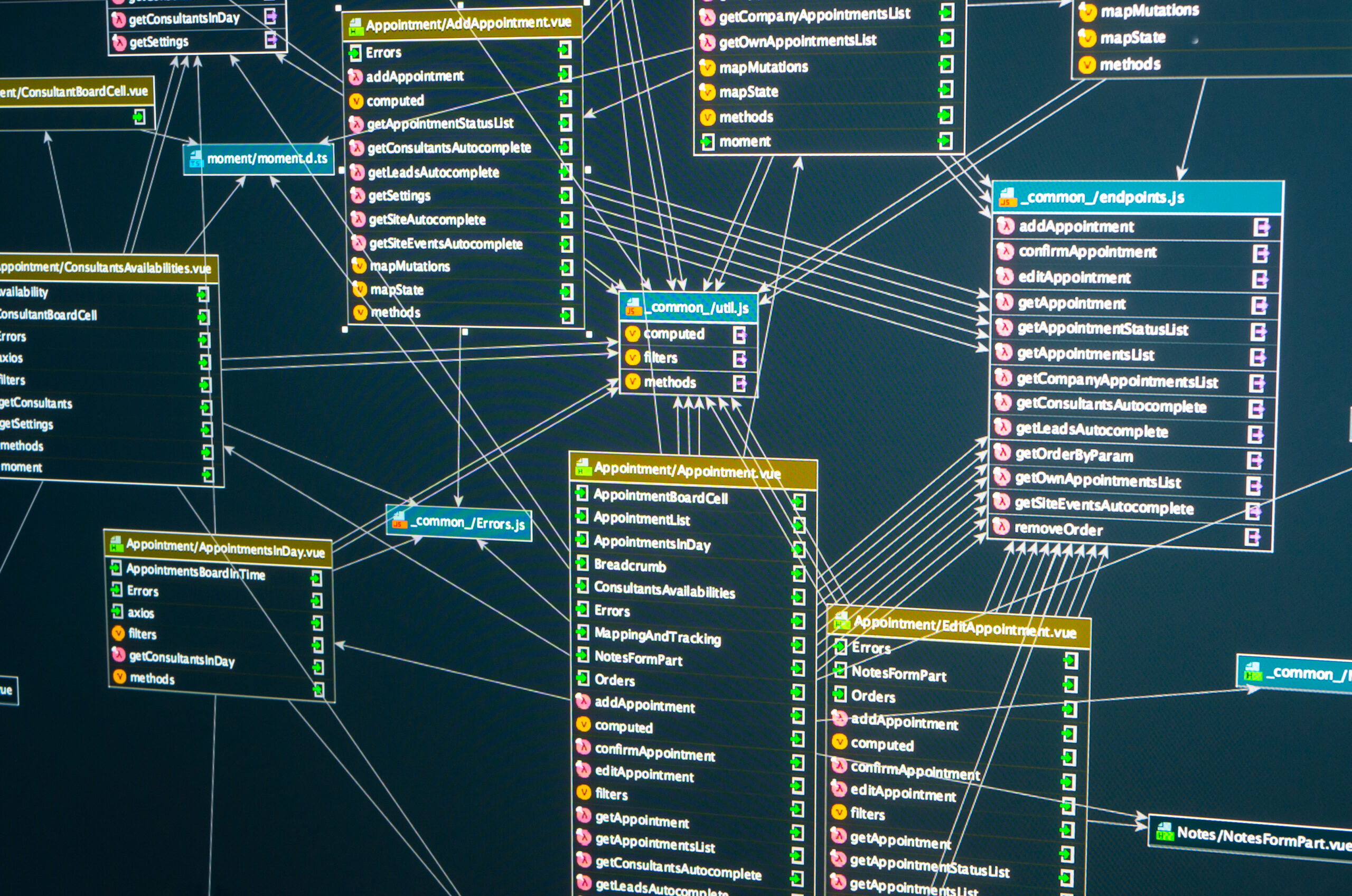

Most teams running ClickHouse at scale eventually hit the same set of issues with a Vector-to-ClickHouse pipeline for observability data, and the same configuration patterns keep it stable. Vector ships logs and metrics from edge agents to ClickHouse via its built-in clickhouse sink, with batching, retries, and backpressure handled at the agent. That single sentence hides a fair amount of detail, and the rest of this piece pulls those details apart so the levers and trade-offs are visible.

The most common version of the problem is straightforward: teams point Vector at ClickHouse with default sink settings, leave the buffer on its in-memory default instead of switching it to disk, and lose data on restarts. Beyond that one mistake, pipeline problems rarely trace back to a single setting. They are usually a combination of schema, sort key, and a few small misconfigurations stacking on top of each other, and the path to fixing them starts with understanding the mechanics.

For teams running ClickHouse in production, the cost of getting this pipeline wrong is felt in tail latency, in runaway memory usage, and in the hours operators spend chasing intermittent issues. Getting it right takes some up-front investment in measurement and a willingness to revisit defaults when the workload changes.

How it actually works

Before changing any setting, it helps to walk through what Vector and ClickHouse are actually doing under the surface. The behaviour described here is not specific to one release; the broad shape has held across recent versions, and the operational implications are the same on self-managed clusters and on managed offerings.

- Vector reads from sources (files, syslog, OTLP, Kafka), runs transforms, and exports through sinks.

- The clickhouse sink batches events into INSERTs sent over the ClickHouse HTTP interface.

- Disk buffers persist data across restarts; memory buffers are smaller but lose data on crash.

- Timestamp parsing happens in transforms before the sink; compression is applied by the sink itself when it sends each batch.

- Backpressure from ClickHouse propagates to upstream sources; tune buffers accordingly.
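The flow in the list above can be sketched as a minimal source, transform, and sink chain. This is an illustrative fragment rather than a complete configuration: the log path, the component names app_files and parsed_logs, and the assumption that every line is a JSON object are all hypothetical.

```yaml
# Sketch only: the path and component names are assumptions.
sources:
  app_files:
    type: file
    include:
      - /var/log/app/*.log        # hypothetical path

transforms:
  parsed_logs:
    type: remap
    inputs: [app_files]
    source: |
      # Assumes each line is a JSON object. This replaces the event with
      # the parsed document, so source-added fields are not carried over.
      . = parse_json!(.message)

sinks:
  ch:
    type: clickhouse
    inputs: [parsed_logs]         # sinks consume named upstream components
    endpoint: http://ch-1:8123
    database: logs
    table: app_logs
```

Each component names its upstream in inputs, which is what lets backpressure from the sink travel back through the transform to the source.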

Each of those steps has its own characteristic cost, and the slow ones tend to be the ones that show up in p95 and p99 latency. That is why the rest of this piece focuses on the levers that actually move those percentiles, rather than on micro-optimisations that look good in synthetic tests but rarely survive contact with production workloads.

Settings that actually matter

The configuration surface of Vector and its clickhouse sink is broad, and most of it does not need to be touched in a typical deployment. The settings below are the ones worth understanding because they shape behaviour directly under load. Defaults work for small workloads; the right values for production are usually different.

| Setting | Suggested value | Notes |
|---|---|---|
| buffer.type | memory or disk | Persistence vs speed. |
| batch.max_bytes | 10485760 | Per-batch upper bound, in bytes (10 MiB). |
| batch.timeout_secs | 5 | Time-based flush. |
| compression | gzip or zstd | Reduce wire bytes. |
| format | json_each_row | Match the ClickHouse input format (JSONEachRow). |
| request.concurrency | adaptive | Self-tunes to ClickHouse capacity. |

None of these are universal. The right number on a node with sixty-four cores and NVMe is not the right number on a smaller VM with attached storage, and the right number for an analytics workload differs from a streaming ingestion workload. The values above are starting points, not endpoints.

Configuration fragment

A small configuration fragment that captures the relevant settings is worth keeping nearby. It is not a complete configuration; it is the part that tends to change between defaults and a tuned cluster.

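    # vector.yaml excerpt
    sinks:
      ch:
        type: clickhouse
        inputs: [parsed_logs]
        endpoint: http://ch-1:8123
        database: logs
        table: app_logs
        compression: zstd
        # The clickhouse sink selects its insert format with `format`;
        # json_each_row corresponds to ClickHouse's JSONEachRow.
        format: json_each_row
        batch:
          max_bytes: 10485760      # 10 MiB per batch
          timeout_secs: 5          # flush at least every 5 seconds
        buffer:
          type: disk               # survives agent restarts
          max_size: 10737418240    # 10 GiB on-disk cap
          # drop_newest discards incoming events once the buffer is full;
          # use `block` to apply backpressure upstream instead.
          when_full: drop_newest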
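The request.concurrency row from the settings table does not appear in the excerpt above. The sketch below shows one way it could sit alongside the other keys, together with a blocking buffer policy for agents where dropping events is not acceptable. The timeout and retry values are assumptions chosen for illustration, not recommendations.

```yaml
# Sketch only: extends the `ch` sink from the excerpt; values are illustrative.
sinks:
  ch:
    type: clickhouse
    inputs: [parsed_logs]
    endpoint: http://ch-1:8123
    database: logs
    table: app_logs
    request:
      concurrency: adaptive      # adjust in-flight requests to what ClickHouse sustains
      timeout_secs: 60           # assumed per-request timeout
      retry_attempts: 10         # assumed cap on retries per batch
    buffer:
      type: disk
      max_size: 10737418240      # 10 GiB
      when_full: block           # push back on upstream components instead of dropping
```

With when_full set to block, a slow or unavailable ClickHouse node stalls the sources once the buffer fills. That is usually the safer trade for file-based sources that can resume from a checkpoint, and a riskier one for sources that cannot wait.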

Tuning approach that works in practice

The list below is the order most operators converge on when tuning a Vector-to-ClickHouse pipeline. It is not a recipe; the right answer depends on the workload. But it is a defensible sequence: each step is cheap to verify, and each one has a measurable effect when the change matters.

- Use disk buffers for any production agent; memory buffers lose data.

- Increase batch.max_bytes until ingest matches expected throughput.

- Enable compression unless the network is internal and bandwidth is free.

- Pin Vector versions in production; transforms can change behaviour subtly between releases.

Each change should be measured against the metrics that matter — usually p95 latency at a target throughput, plus query log statistics and CPU behaviour. Changes that do not move those numbers are not actually changes; they are configuration churn.

What to look at first

When something goes wrong with the pipeline, the first move is usually a handful of system table queries on the ClickHouse side. The objects below are the ones that produce useful output fast, without needing a full monitoring pipeline to interpret.

| Object | What it shows |
|---|---|
| system.parts | Active and inactive data parts per table, with row counts, bytes on disk, and merge state. |
| system.query_log | Completed queries with duration, memory, rows read, and the user who ran them. |

Guardrails worth setting up

Tuning without monitoring is guesswork. The signals listed below are the ones that catch problems early enough to act on, and most production clusters end up alerting on a similar shortlist whether they planned to or not.

- Track Vector buffer utilisation.

- Alert on dropped events at any sink.

- Monitor ClickHouse insert errors that originate from Vector batches.
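One way to get the Vector-side signals above is to expose Vector's own telemetry for scraping. The fragment below is a minimal sketch that assumes a Prometheus-compatible scraper is available; the component names and the listen address are illustrative.

```yaml
# Sketch only: component names and the listen address are assumptions.
sources:
  vector_metrics:
    type: internal_metrics       # Vector's own counters and gauges

sinks:
  vector_prom:
    type: prometheus_exporter
    inputs: [vector_metrics]
    address: 0.0.0.0:9598        # scrape target for the monitoring system
```

Buffer usage, discarded events, and component errors are reported per component, with series such as component_discarded_events_total and component_errors_total. Exact metric names and labels vary between Vector versions, so check them against the running release before writing alert rules.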

Pitfalls that show up repeatedly

The same handful of mistakes appears across cluster after cluster. Most of them are easier to avoid than to fix, and the cost of getting them wrong tends to compound — what starts as a small misconfiguration becomes a real incident weeks later when the workload grows.

- Memory buffer + restart = data loss. Use disk buffers.

- Sending one event per request; the sink should batch.

- Skipping transforms that parse timestamps; ingest then uses event_time = ingestion time.
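The timestamp pitfall in the list above can be avoided with a small remap transform. The sketch below assumes the original event time arrives as an ISO 8601 / RFC 3339 string in a field named time; that field name, the transform name with_event_time, and the upstream name parsed_logs are all hypothetical.

```yaml
# Sketch only: `.time` and the component names are assumptions.
transforms:
  with_event_time:
    type: remap
    inputs: [parsed_logs]
    source: |
      # Parse the application's own timestamp; "%+" matches ISO 8601 / RFC 3339.
      ts, err = parse_timestamp(.time, format: "%+")
      if err == null {
        .timestamp = ts
      }
      # On a parse failure `.timestamp` keeps whatever value it already had.

sinks:
  ch:
    type: clickhouse
    inputs: [with_event_time]
    endpoint: http://ch-1:8123
    database: logs
    table: app_logs
    date_time_best_effort: true  # let ClickHouse accept RFC 3339 timestamp strings
```

Capturing the error instead of aborting keeps events with malformed timestamps in the pipeline, at the cost of those rows carrying a less accurate time.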

None of those are exotic. They show up in code reviews, in postmortems, and occasionally in vendor support tickets, and the operational habit of catching them early is worth more than any single configuration change.

Frequently asked questions

A handful of questions come up every time this topic is discussed. The answers below are the ones that hold up across most production deployments; the exceptions are usually visible in the metrics.

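Is HTTP or TCP better for the sink?

Vector's clickhouse sink talks to ClickHouse over the HTTP interface (port 8123 by default), which is simple and works behind load balancers. The native TCP protocol saves a small amount of overhead for clients that support it, but it is not something this sink exposes.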

Can Vector replace Filebeat / Fluentd / Fluent Bit?

For most pipelines, yes. Each tool has rough edges; choose by ecosystem.

Does Vector support OTLP?

Yes — OTLP source and sink are both available.

How do I handle multi-tenancy?

Per-source transforms route into different tables or databases.
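As a rough illustration of that routing, the sketch below uses a route transform to split events by a tenant field and sends each branch to its own database. The tenant field, the tenant names, and the database names are hypothetical.

```yaml
# Sketch only: the `.tenant` field and all names below are assumptions.
transforms:
  by_tenant:
    type: route
    inputs: [parsed_logs]
    route:
      team_a: '.tenant == "team_a"'
      team_b: '.tenant == "team_b"'

sinks:
  ch_team_a:
    type: clickhouse
    inputs: [by_tenant.team_a]   # each route is exposed as <transform>.<route>
    endpoint: http://ch-1:8123
    database: team_a_logs
    table: app_logs
  ch_team_b:
    type: clickhouse
    inputs: [by_tenant.team_b]
    endpoint: http://ch-1:8123
    database: team_b_logs
    table: app_logs
```

Separate sinks also give each tenant its own buffer and batch settings, so one noisy tenant is less likely to starve the others.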

How do I roll out config changes?

Vector validates configuration on start, and the vector validate subcommand can check a configuration file before it is deployed. Roll changes out progressively rather than in one global push.

ClickHouse rarely operates in isolation. It sits inside a larger data platform with its own monitoring, deployment, and incident workflows, and the engine’s performance characteristics interact with those workflows in ways that are easy to miss. Treating ClickHouse as part of a system, rather than a standalone service, generally produces better outcomes.

Part count is a quiet failure mode: the cluster keeps working as parts accumulate, and then suddenly latency spikes or a merge thread saturates. Watching part count per partition and tying it to ingestion rate is a small habit that catches the problem long before it becomes an incident.

A baseline taken once and never refreshed is rarely useful for long. The values that define normal on a ClickHouse cluster shift as data grows, as queries are added, and as schema evolves. Periodically refreshing baselines and comparing to historical trends gives the team something concrete to react to when behaviour changes.

Behind every ClickHouse cluster there is a team that owns it, and the team’s habits matter as much as the configuration. Clear runbooks, clear ownership, and unambiguous SLOs do more for reliability than any single tuning decision, and they are what make tuning sustainable over time.

Configuration changes that are documented and reversible are easier to live with than ones that are not. Even small changes are worth recording with the date, the reason, and the before-and-after metric, because the same change is likely to come up again in a future incident or capacity review.

Hardware specifications change as nodes are replaced and infrastructure is upgraded. A configuration that fit a previous generation of disks or CPUs may underperform on the next, and revisiting tuning decisions when hardware changes is part of routine operations rather than an exceptional event.

Teams that want a deeper look at running a Vector-to-ClickHouse pipeline for observability data can review ChistaDATA’s observability articles, or contact ChistaDATA about ClickHouse support for production engagements.

Putting it together

Teams that run a Vector-to-ClickHouse observability pipeline well treat it as ongoing work, not a one-time configuration exercise. The defaults Vector and ClickHouse ship with are reasonable starting points but rarely the right answer for a specific workload, and the difference between a cluster that holds its SLOs and one that struggles is often the willingness to measure first and tune second.

The work is rarely finished, but it is also not as mysterious as it sometimes feels: a small number of mechanisms drive most of the behaviour, and the levers that matter are mostly the ones described above.