Building High Performance Lean Data Platforms – Archiving Data to ClickHouse

ClickHouse is a versatile database management system known for its real-time analytics capabilities, but it offers more than that. ClickHouse can also serve as an archive store for transaction computing systems or relational database management systems (RDBMS), primarily because of its performance, scalability, and reliability. Used as an archival store, ClickHouse can strengthen an organization’s data retention strategy.

ClickHouse’s design and architecture make it well-suited for efficiently handling vast amounts of data. It employs columnar storage, which enables efficient compression and quick data retrieval. This makes it ideal for storing and querying large volumes of historical data.

In addition, ClickHouse’s scalability is impressive. It can handle massive workloads and scale horizontally by distributing data across multiple servers. This allows organizations to easily expand their data storage and processing capabilities as their needs grow.

Moreover, ClickHouse is renowned for its reliability. It offers features like replication, sharding, and fault-tolerant design, ensuring data durability and high availability. This makes it suitable for critical transaction computing systems requiring performance and data integrity.

By leveraging ClickHouse as an archive store for transaction computing systems or RDBMS, organizations can benefit from its exceptional performance in handling analytical queries while retaining historical data for compliance or reference purposes. It provides a cost-effective and efficient solution for managing large volumes of data over time.

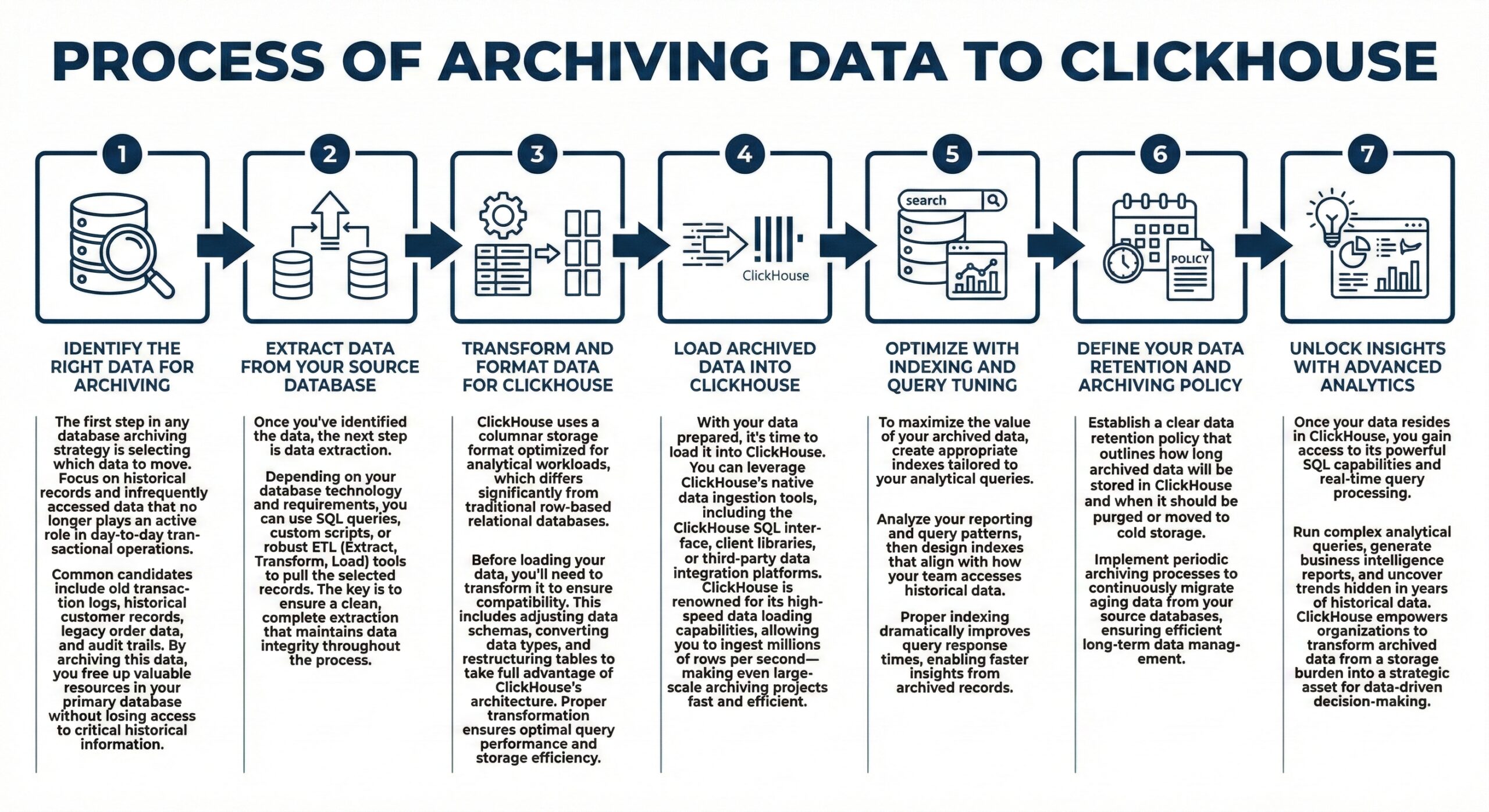

Process of Archiving Data to ClickHouse

Step 1: Identify the Right Data for Archiving

The first step in any database archiving strategy is selecting which data to move. Focus on historical records and infrequently accessed data that no longer plays an active role in day-to-day transactional operations. Common candidates include old transaction logs, historical customer records, legacy order data, and audit trails. By archiving this data, you free up valuable resources in your primary database without losing access to critical historical information.
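One way to size the candidate set is to count how many rows fall before an age cutoff. The sketch below is a minimal illustration rather than a prescribed method: it uses Python's built-in sqlite3 module as a stand-in for the source database, and the `orders` table, its `created_at` column, and the `count_archive_candidates` helper are hypothetical names chosen for the example.

```python
import sqlite3
from datetime import date


def count_archive_candidates(conn, table, date_column, cutoff):
    """Return (rows_older_than_cutoff, total_rows) for one table.

    Table and column names are interpolated into the SQL text, so they
    must come from trusted configuration, never from user input.
    Dates are assumed to be stored as ISO-8601 text (YYYY-MM-DD).
    """
    sql = f"SELECT SUM({date_column} < ?), COUNT(*) FROM {table}"
    old, total = conn.execute(sql, (cutoff.isoformat(),)).fetchone()
    return (old or 0, total)  # SUM() is NULL when the table is empty


def demo():
    # In-memory SQLite database standing in for the production RDBMS.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, created_at TEXT)")
    conn.executemany(
        "INSERT INTO orders (created_at) VALUES (?)",
        [("2019-03-01",), ("2020-11-15",), ("2024-06-30",)],
    )
    return count_archive_candidates(conn, "orders", "created_at", date(2022, 1, 1))


if __name__ == "__main__":
    old, total = demo()
    print(f"{old} of {total} rows are older than the cutoff")
```

Running the same count per table gives a rough ranking of which tables would free the most space in the primary database.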

Step 2: Extract Data from Your Source Database

Once you’ve identified the data, the next step is data extraction. Depending on your database technology and requirements, you can use SQL queries, custom scripts, or robust ETL (Extract, Transform, Load) tools to pull the selected records. The key is to ensure a clean, complete extraction that maintains data integrity throughout the process.
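As a rough sketch of a script-based extraction, the example below pages through old rows by primary key and writes them to CSV. It assumes a hypothetical `orders` table with the columns listed in `COLUMNS`, positive integer ids, and ISO-8601 text dates; sqlite3 again stands in for the real source database driver.

```python
import csv
import sqlite3

# Hypothetical column list for the example `orders` table.
COLUMNS = ["id", "customer_id", "amount", "created_at"]


def extract_batches(conn, cutoff, batch_size=1000):
    """Yield lists of rows older than `cutoff`, paging by primary key.

    Keyset pagination (id > last_id) keeps each query cheap and avoids
    loading the whole archive set into memory.
    """
    last_id = 0
    while True:
        rows = conn.execute(
            f"SELECT {', '.join(COLUMNS)} FROM orders "
            "WHERE created_at < ? AND id > ? ORDER BY id LIMIT ?",
            (cutoff, last_id, batch_size),
        ).fetchall()
        if not rows:
            return
        yield rows
        last_id = rows[-1][0]  # id of the last row in this batch


def write_csv(batches, out):
    """Write batches to a CSV file object with a header row; return the row count."""
    writer = csv.writer(out)
    writer.writerow(COLUMNS)
    count = 0
    for batch in batches:
        writer.writerows(batch)
        count += len(batch)
    return count
```

Comparing the count returned by `write_csv` with a `SELECT COUNT(*)` over the same cutoff is one simple completeness check before anything is deleted from the source.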

Step 3: Transform and Format Data for ClickHouse

ClickHouse uses a columnar storage format optimized for analytical workloads, which differs significantly from traditional row-based relational databases. Before loading your data, you’ll need to transform it to ensure compatibility. This includes adjusting data schemas, converting data types, and restructuring tables to take full advantage of ClickHouse’s architecture. Proper transformation ensures optimal query performance and storage efficiency.
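To make the schema step concrete, here is a small sketch that maps source column types to ClickHouse types and emits a `CREATE TABLE` statement for a MergeTree table. The mapping is an illustrative assumption based on PostgreSQL-style type names and is far from exhaustive; the helper names, the `archive.orders` table, and its columns are hypothetical.

```python
import re

# Illustrative, incomplete mapping from PostgreSQL-style types to ClickHouse types.
TYPE_MAP = {
    "integer": "Int32",
    "bigint": "Int64",
    "text": "String",
    "varchar": "String",
    "boolean": "Bool",
    "date": "Date",
    "timestamp": "DateTime64(6)",
    "double precision": "Float64",
    "uuid": "UUID",
}


def to_clickhouse_type(src_type, nullable=False):
    """Translate one source type name into a ClickHouse type name."""
    src = src_type.strip().lower()
    m = re.fullmatch(r"(?:numeric|decimal)\((\d+),\s*(\d+)\)", src)
    if m:
        ch = f"Decimal({m.group(1)}, {m.group(2)})"
    else:
        base = re.sub(r"\(.*\)", "", src).strip()  # varchar(255) -> varchar
        if base not in TYPE_MAP:
            raise ValueError(f"no mapping defined for source type {src_type!r}")
        ch = TYPE_MAP[base]
    return f"Nullable({ch})" if nullable else ch


def build_create_table(table, columns, order_by, partition_by=None):
    """Build MergeTree DDL from (name, source_type, nullable) tuples."""
    cols = ",\n".join(
        f"    `{name}` {to_clickhouse_type(src, nullable)}"
        for name, src, nullable in columns
    )
    ddl = f"CREATE TABLE {table}\n(\n{cols}\n)\nENGINE = MergeTree\n"
    if partition_by:
        ddl += f"PARTITION BY {partition_by}\n"
    ddl += f"ORDER BY ({', '.join(order_by)})"
    return ddl


if __name__ == "__main__":
    print(build_create_table(
        "archive.orders",
        [("id", "bigint", False), ("customer_id", "integer", False),
         ("amount", "numeric(12,2)", False), ("created_at", "timestamp", False)],
        order_by=["customer_id", "created_at"],
        partition_by="toYYYYMM(created_at)",
    ))
```

Raising on unmapped types, rather than silently falling back to `String`, forces each conversion decision to be made explicitly.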

Step 4: Load Archived Data into ClickHouse

With your data prepared, it’s time to load it into ClickHouse. You can use the ClickHouse SQL interface, client libraries, or third-party data integration platforms. ClickHouse is known for fast data loading: when rows are inserted in large batches rather than one at a time, it can ingest millions of rows per second on suitable hardware, which keeps even large-scale archiving projects fast and efficient.
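One dependency-free way to load a prepared file is ClickHouse's HTTP interface, which accepts the `INSERT` statement in the `query` parameter and the data in the request body. The sketch below builds such a request with the standard library. The server address `http://localhost:8123`, the `archive.orders` table, and the helper names are assumptions for illustration, and authentication is omitted.

```python
import urllib.parse
import urllib.request


def build_insert_request(base_url, table, csv_bytes):
    """Build a POST request that sends CSV (with a header row) into `table`."""
    query = f"INSERT INTO {table} FORMAT CSVWithNames"
    # quote_via=quote encodes spaces as %20 rather than '+'.
    qs = urllib.parse.urlencode({"query": query}, quote_via=urllib.parse.quote)
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/?{qs}", data=csv_bytes, method="POST"
    )


def send(request, timeout=30):
    """Send the request; urlopen raises HTTPError on an error response."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return resp.status


if __name__ == "__main__":
    payload = b"id,customer_id,amount,created_at\n1,42,19.99,2019-03-01 10:00:00\n"
    req = build_insert_request("http://localhost:8123", "archive.orders", payload)
    print(req.get_method(), req.full_url)
    # send(req)  # uncomment when a ClickHouse server is reachable
```

Sending one request per large batch, instead of one per row, is what lets the load step approach the throughput described above.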

Step 5: Optimize with Indexing and Query Tuning

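To maximize the value of your archived data, tailor the table’s indexing to your analytical queries. In ClickHouse’s MergeTree tables this mainly means choosing a sorting key (the ORDER BY clause, which defines the sparse primary index) and a partitioning key, then adding data skipping indexes for columns that are filtered often but are not part of the sorting key. Analyze your reporting and query patterns, then design these keys and indexes to match how your team accesses historical data. Proper indexing dramatically improves query response times, enabling faster insights from archived records.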
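Indexes in ClickHouse's MergeTree tables work by skipping whole blocks of rows (granules, 8192 rows each by default) rather than by pointing at individual rows. The toy model below is a simplification written for this article, not ClickHouse code: it keeps a min/max per granule and counts how many granules a range query would have to read, which shows why the physical sort order of the data matters so much.

```python
def granules_to_read(values, granule_size, lo, hi):
    """Count granules whose [min, max] range overlaps the query range [lo, hi]."""
    hits = 0
    for start in range(0, len(values), granule_size):
        chunk = values[start:start + granule_size]
        # A granule can be skipped only if it lies entirely outside the range.
        if min(chunk) <= hi and max(chunk) >= lo:
            hits += 1
    return hits


if __name__ == "__main__":
    sorted_values = list(range(100))                        # stored in key order
    scattered_values = [(i * 37) % 100 for i in range(100)]  # same values, mixed order
    for label, data in (("sorted", sorted_values), ("scattered", scattered_values)):
        n = granules_to_read(data, granule_size=10, lo=40, hi=49)
        print(f"{label}: {n} of 10 granules read")
```

With these example values the sorted layout touches 1 of 10 granules while the scattered layout touches all 10. In a real table, a min/max skipping index is declared with a statement of the form `ALTER TABLE archive.orders ADD INDEX idx_amount amount TYPE minmax GRANULARITY 4`, where the table, index, and column names are hypothetical.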

Step 6: Define Your Data Retention and Archiving Policy

Establish a clear data retention policy that outlines how long archived data will be stored in ClickHouse and when it should be purged or moved to cold storage. Implement periodic archiving processes to continuously migrate aging data from your source databases, ensuring efficient long-term data management.
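A retention policy can be captured as data and turned into a ClickHouse TTL rule, so the database moves or deletes aging rows on its own. The following sketch assumes a hypothetical `RetentionPolicy` structure, an `archive.orders` table with a `Date` or `DateTime` column named `created_at`, and a storage volume named `cold` that would have to exist in the server's storage configuration.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass(frozen=True)
class RetentionPolicy:
    table: str
    date_column: str
    hot_days_in_source: int          # how long rows stay in the source RDBMS
    delete_after_years: int          # when ClickHouse should purge rows
    cold_after_months: Optional[int] = None  # when to move rows to cold storage
    cold_volume: str = "cold"        # assumed volume name from the storage policy


def source_cutoff(policy, today):
    """Rows older than this date are due to be archived out of the source."""
    return today - timedelta(days=policy.hot_days_in_source)


def build_ttl_statement(policy):
    """Build an ALTER TABLE ... MODIFY TTL statement for the policy."""
    rules = []
    if policy.cold_after_months is not None:
        rules.append(
            f"{policy.date_column} + INTERVAL {policy.cold_after_months} MONTH "
            f"TO VOLUME '{policy.cold_volume}'"
        )
    rules.append(
        f"{policy.date_column} + INTERVAL {policy.delete_after_years} YEAR DELETE"
    )
    return f"ALTER TABLE {policy.table} MODIFY TTL " + ", ".join(rules)


if __name__ == "__main__":
    policy = RetentionPolicy("archive.orders", "created_at",
                             hot_days_in_source=365, delete_after_years=7,
                             cold_after_months=12)
    print(source_cutoff(policy, date.today()))
    print(build_ttl_statement(policy))
```

Keeping the policy in one structure means the periodic archiving job and the ClickHouse TTL are derived from the same numbers and cannot drift apart.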

Step 7: Unlock Insights with Advanced Analytics

Once your data resides in ClickHouse, you gain access to its powerful SQL capabilities and real-time query processing. Run complex analytical queries, generate business intelligence reports, and uncover trends hidden in years of historical data. ClickHouse empowers organizations to transform archived data from a storage burden into a strategic asset for data-driven decision-making.
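As an example of the kind of report this enables, the sketch below defines a monthly revenue query over the archive and parses the result. The `archive.orders` table, its columns, the server address, and the helper names are assumptions for illustration; the query is sent over ClickHouse's HTTP interface and returned in the `JSONEachRow` format (one JSON object per line).

```python
import json
import urllib.request

# Hypothetical report: order count and revenue per month across the archive.
MONTHLY_REVENUE_SQL = """
SELECT
    toStartOfMonth(created_at) AS month,
    count() AS orders,
    sum(amount) AS revenue
FROM archive.orders
GROUP BY month
ORDER BY month
FORMAT JSONEachRow
"""


def parse_monthly_rows(text):
    """Parse JSONEachRow output: one JSON object per non-empty line."""
    rows = [json.loads(line) for line in text.splitlines() if line.strip()]
    for row in rows:
        # 64-bit integers may arrive quoted as strings, depending on server settings.
        row["orders"] = int(row["orders"])
    return rows


def run_report(base_url="http://localhost:8123/", timeout=30):
    """POST the query text to the HTTP interface and return the parsed rows."""
    req = urllib.request.Request(
        base_url, data=MONTHLY_REVENUE_SQL.encode("utf-8"), method="POST"
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return parse_monthly_rows(resp.read().decode("utf-8"))
```

Because the aggregation runs inside ClickHouse, only one small row per month crosses the network, regardless of how many years of history the archive holds.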

Unleashing Data-driven Insights in Banking: ClickHouse for Real-Time Analytics and Efficient Archive Storage

In the banking and financial services sector, ClickHouse can streamline data management and improve decision-making. One practical use case combines real-time analytics with archive storage.

Real-Time Analytics: By implementing ClickHouse, banks can analyze and gain valuable real-time insights from their transactional data. They can monitor customer transactions, detect fraudulent activities, identify patterns for risk assessment, and generate customized reports and dashboards for timely decision-making. ClickHouse’s performance and scalability enable fast querying and analysis of large volumes of data, empowering banks to make informed and proactive business decisions.

Archive Storage: Banks must retain transactional data for compliance and historical reference. ClickHouse’s columnar storage and compression capabilities make it well suited to storing and managing vast amounts of historical data efficiently. Banks can use ClickHouse as an archive store to ensure data integrity, scalability, and reliability while optimizing storage costs. They can retrieve archived data when needed, perform historical trend analysis, and support their regulatory reporting obligations.

Overall, integrating ClickHouse into banking and financial services businesses allows them to harness the power of real-time analytics for operational insights and leverage reliable archive storage for compliance and historical purposes. This enables banks to enhance their data management capabilities, improve decision-making processes, and ensure regulatory compliance cost-effectively.

Conclusion

In summary, ClickHouse is useful for more than real-time analytics. Its performance, scalability, and reliability make it a powerful choice as an archive store for transaction computing systems or RDBMS, enabling organizations to meet both their analytics and long-term data storage goals.

To learn more about ClickHouse as an archival store, read the following article:

Archiving Data From PostgreSQL to ClickHouse